Network and Distributed System Security Symposium 2026

The Network and Distributed System Security (NDSS) Symposium is a leading venue for the exchange of ideas between researchers and practitioners in network and distributed system security. It places strong emphasis on practical security challenges, particularly the design and implementation of real-world systems. The symposium is a key platform for presenting and discussing the latest advances in Internet security, which makes it an essential forum for anyone following developments at the forefront of the field.

At NDSS 2026, the study “Chasing Shadows: Pitfalls in LLM Security Research” was presented. Co-authored by an international team of researchers, including Philipp Normann and Daniel Arp (both at TU Wien), the work examines one of the fastest-growing areas in cybersecurity: the use of large language models (LLMs).

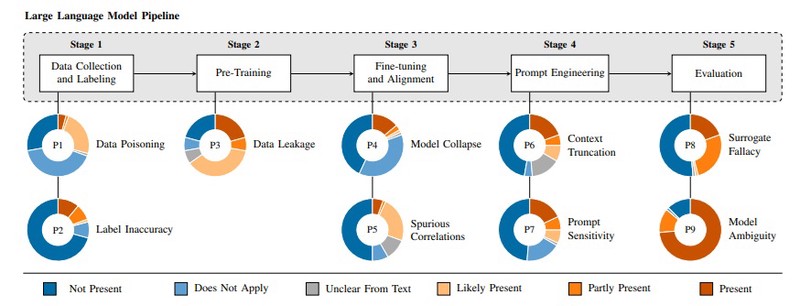

The study addresses a central challenge facing the research community as LLMs become increasingly integrated into cybersecurity tasks such as vulnerability detection, secure code generation, and automated analysis. While these models offer powerful new capabilities, their complexity and unique behavior introduce risks that can undermine the validity and reliability of scientific results. To better understand these challenges, the authors identify nine recurring pitfalls that affect how LLM-based security research is conducted. These issues arise throughout the entire research process, including data collection, model training, prompting strategies, and evaluation. Some pitfalls are specific to LLMs, such as model collapse caused by training on synthetic data and unpredictable behavior due to prompt sensitivity. Others, including data leakage and spurious correlations, are well known from earlier machine learning research but become more severe and harder to detect in the context of large language models.

To assess how widespread these problems are, the researchers conducted a systematic analysis of 72 peer-reviewed papers published at leading security and software engineering conferences between 2023 and 2024. The results reveal that every paper contains at least one of the identified pitfalls, and that only a small fraction of these issues are explicitly acknowledged. This suggests that many of the underlying risks remain unrecognized, even in high-quality research. The practical implications of these findings are demonstrated through a series of empirical case studies, which show that even minor methodological issues can significantly distort evaluation results, inflate reported performance, and reduce reproducibility. For example, data leakage can artificially improve model metrics, while a limited context size can cut off essential information and bias evaluation outcomes. In addition, variations in model configuration can lead to inconsistent, difficult-to-reproduce results.

Taken together, these findings underline the need for stronger methodological rigor in LLM-based security research. As these models become more widely adopted, ensuring transparency, reproducibility, and robustness is critical to maintaining the credibility of the field. If these challenges are not addressed, research risks overestimating model capabilities or failing to capture important limitations. To support the community, the authors provide practical guidelines and recommendations for improving research practices. These include clearer reporting of experimental setups, systematic validation of data, and more robust evaluation procedures.